Shading Annotations in the Wild

Balazs Kovacs, Sean Bell, Noah Snavely, Kavita Bala

Cornell University

Computer Vision and Pattern Recognition (CVPR) 2017

Abstract:

Understanding shading effects in images is critical for a variety of vision and graphics problems, including intrinsic image decomposition, shadow removal, image relighting, and inverse rendering. As is the case with other vision tasks, machine learning is a promising approach to understanding shading, but there is little ground truth shading data available for real-world images. We introduce Shading Annotations in the Wild (SAW), a new large-scale, public dataset of shading annotations in indoor scenes, comprising multiple forms of shading judgments obtained via crowdsourcing, along with shading annotations automatically generated from RGB-D imagery. We use this data to train a convolutional neural network to predict per-pixel shading information in an image. We demonstrate the value of our data and network in an application to intrinsic images, where we can reduce decomposition artifacts produced by existing algorithms. Our database is available at http://opensurfaces.cs.cornell.edu/saw/.

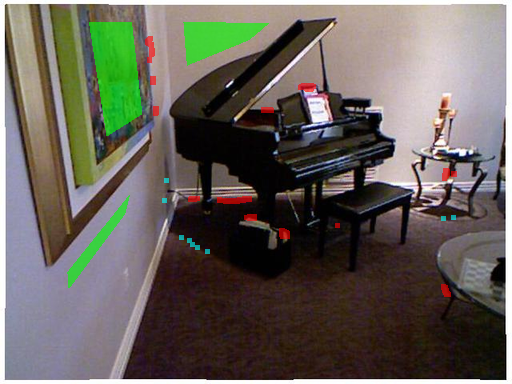

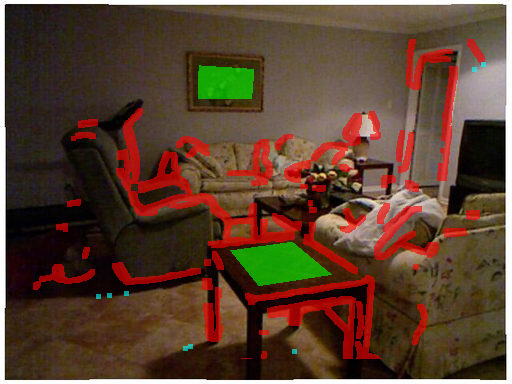

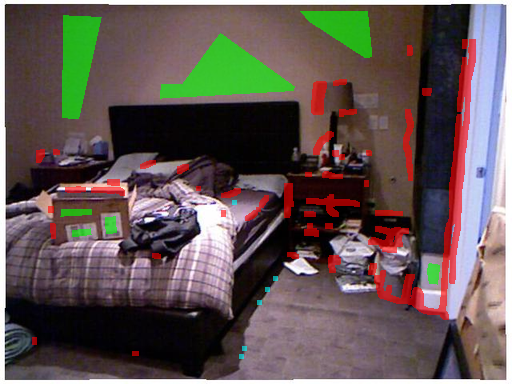

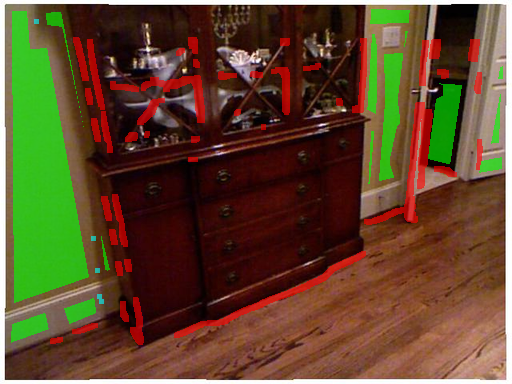

Examples of annotations in the SAW dataset. Green indicates regions of near-constant shading (but with possibly varying reflectance). Red indicates edges due to discontinuities in shape (surface normal or depth). Cyan indicates edges due to discontinuities in illumination (cast shadows). Using these annotations, we can learn to classify regions of an image into different shading categories.
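
The three annotation categories in the caption can be thought of as per-pixel class labels. The following is a minimal sketch of that idea, not the dataset's actual file format or the paper's code: the `ShadingLabel` names, the integer codes, the `UNLABELED` value, and the `label_fractions` helper are all hypothetical and chosen only for illustration.

```python
from collections import Counter
from enum import Enum


class ShadingLabel(Enum):
    # Hypothetical names and codes for illustration; not SAW's file format.
    SMOOTH = 0       # near-constant shading (green in the figure)
    SHAPE_EDGE = 1   # surface normal or depth discontinuity (red)
    SHADOW_EDGE = 2  # illumination discontinuity, cast shadow (cyan)
    UNLABELED = 3    # pixel with no annotation (assumed placeholder)


def label_fractions(label_map):
    """Return the fraction of annotated pixels that fall in each category."""
    counts = Counter(
        label
        for row in label_map
        for label in row
        if label is not ShadingLabel.UNLABELED
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: n / total for label, n in counts.items()}


# A toy 2x4 label map standing in for an annotated image.
S, N, B, U = (ShadingLabel.SMOOTH, ShadingLabel.SHAPE_EDGE,
              ShadingLabel.SHADOW_EDGE, ShadingLabel.UNLABELED)
toy_map = [
    [S, S, N, U],
    [S, S, N, B],
]
fractions = label_fractions(toy_map)
```

A classifier trained on such labels would predict one of these categories (or a probability for each) at every pixel; the summary above only shows how sparse annotations could be tallied while ignoring unlabeled pixels.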

BibTeX:

@article{kovacs17shading,
  author = "Balazs Kovacs and Sean Bell and Noah Snavely and Kavita Bala",
  title = "Shading Annotations in the Wild",
  journal = "Computer Vision and Pattern Recognition (CVPR)",
  year = "2017",
}

Code and Data

We include a script that downloads and unzips the whole dataset. The script also lists the files that will be downloaded, along with their sizes. See the GitHub repository for details.

Code and data (GitHub repository)

License

The annotations are licensed under a Creative Commons Attribution 4.0 International License. The photos have their own licenses.

Acknowledgement

This work was supported by the National Science Foundation (grants IIS-1149393, IIS-1011919, IIS-1161645, IIS-1617861) and by a Google Faculty Research Award.